A practical clinical guide for herbalists navigating AI — from hallucinations and bias to GDPR compliance, patient privacy, and ethical integration in practice.

It is completely understandable to approach artificial intelligence (AI) with a mix of awe, scepticism, and perhaps a little fear. Practitioners may worry about a dystopian technological takeover, the dilution of traditional knowledge, or even the obsolescence of the practitioner.

Likewise, others might see it as the greatest administrative or research assistant ever invented and find themselves relying on it for daily tasks. Both those angles have valid points, but as with all ecosystems the importance is to find the comfortable milieu, a balanced and nuanced approach. With new technology, it is as easy to take the alarmist or sceptic route as much as it is to become blindly over-reliant.

With herbalists who tend to favour spending time with nature over technology, it is not surprising that many claim that they are “staying clear” from the technology due to their ethical or grounded approach to life. However, the deepest flaw in the statement “I don’t use AI” in the present day is that it is now categorically impossible to avoid it when using the internet. Whether one likes it or not, any engagement with the “internet of things” comes with an automatic and often invisible interaction with AI.

The purpose of this article is to address the implications and effects that the AI digital age brings to modern clinical practice and what practitioners can look out for. AI implicates both those claiming they are not using it and those who are already readily implementing it in their practice. While there are broader ethical and environmental factors to consider, these issues do not fit the scope of this article to keep the focus concise and clinical, as numerous other papers available online already address them.

AI is a tool, not a teacher nor an authority: A mirror, not a mind

At its core, AI today is merely a powerful tool for augmentation. It is most definitely not intelligent in the true sense of the word unlike many erroneous assumptions. While it is incredible at performing certain tasks, it can fool people into believing it is something that it is not, and this is because they do not understand its foundation and nature.

“At its core, AI today is merely a powerful tool for augmentation.”

The most advanced models still fail miserably in aspects such as nuance, authentic lived experience and human intuition because they do not have the intelligence, context and the organs or senses of perception that we have. As of March 2026, it is apparent that AI as we know it is reaching its technological and developmental limits as discussed further below.

AI has no conscience, no morality, and no clinical discernment. It is a tool like a hammer and not a living thing. One does not use a hammer to tend to a delicate seedling, just as one should not use a sophisticated predictive text engine to understand and improve the complex health issues of a patient.

However, the undeniable fact is that an individual actively resisting or dismissing their engagement with AI while still using the internet is akin to declaring they are not engaging with the automobile industry because they don’t drive, yet they still participate in society. The food they buy, the mail they receive, the fuel that heats their home and any public or personal transport they use all rely on the deeply ingrained petrochemical infrastructure. Likewise, AI is already systematically integrated into the internet, and increasingly into the workflows of businesses. This includes search engines, mailboxes, website hosts, autocorrection and many other services. It is unavoidable.

On the opposite spectrum, overreliance or uninformed use of this new technology can be dangerous, perhaps even more so than its dismissal. Just as driving a car requires training, a licence, and an understanding of the rules of the road to navigate safely, successfully utilising AI in a clinical setting requires some level of AI knowledge and understanding.

This is why the EU has already implemented the EU AI ACT 2024, enforced since February 2025 in European countries which includes mandatory AI literacy for professionals using AI. This particularly affects healthcare, where any AI system used for health-related activities or assessment is considered “High Risk”. This deeply implicates herbalists and other CAM practitioners operating in Ireland or within the EU (1).

In addition, with patients increasingly turning to “Dr. AI” for quick fixes and diagnosing themselves, practitioners can no longer afford to sit on the sidelines and ignore the pathologies and dangers that come from AI misuse, in particular for mental health or flawed health advice. Practitioners that strive to understand or learn to “drive” these complex models effectively, or at least have the “theory”, cannot only streamline their clinics, but to more importantly protect their patients and professional integrity.

In the EU, AI literacy is no longer an option in healthcare, it is a requirement by law and the UK may follow suit soon with the introduction of the UK AI Bill due to be implemented by 2027.

Both the Medical Council (Ireland) and the GMC (UK) are actively addressing AI use for health and within clinical settings. They insist that AI should augment, not replace clinical judgment and that ultimately the practitioner is responsible for the use of any AI advice (2,3). With regulated health sectors complying, it is vitally important for the self-regulated sectors to follow suit in order to show their ability to self regulate.

The illusion of intelligence: What exactly is a large language model?

We must first dispel a common myth: large language models (LLMs) like ChatGPT, Claude, and Gemini are commonly referred to as AI, AI chatbots, GPAI (general purpose AI) or narrow AI and are not “true AI” otherwise known as artificial general intelligence (AGI). They are, essentially, no more than highly sophisticated multimodal predictive text engines. In other words, an LLM is trained on vast amounts of data from the internet and literature to predict the most statistically likely next word in a sequence, note in a song, pixel in an image and frame in a video.

When it is asked a question, it is not looking up the answer in a database, it is calculating what words usually follow the words in the prompt and what is the most agreeable way of doing so. While an incredible asset for specific tasks, the misunderstanding of this fundamental mechanic underlies the most significant clinical dangers practitioners face today.

In fact, a recent study, along with a mounting body of evidence, suggests that LLMs as they are now, including the most advanced models as of the beginning of 2026, are mathematically reaching their limits and that it is unlikely that AI will significantly develop intelligence this year or any time soon.

No matter how much information is being fed into the AI models, no matter how large the data centre hosting them is, the architecture they are built on will not address the underlying pathologies and errors nor improve their ability to predict or understand context. They are not becoming sentient nor are they near to achieving human intelligence or judgment without a major technological breakthrough and a complete restructuring of their digital synapses (4).

The human analogy

Another way of putting it is to use an analogy of the human brain. The architecture of an LLM can be understood as a disconnected prefrontal cortex functioning without the cerebellum, limbic system and broader nervous system that belong to a whole aware being.

This isolated part of the brain, whose role is to govern executive functions, is structurally incapable of developing into a fully cognitive intelligence. Without the functional equivalents of the cerebellum and limbic system, these models will remain “narrow” and disconnected from full cognitive potential, regardless of the volume of data or computational power fed into them. This illustrates the fundamental limitation we have reached with current LLMs, thus the synonym: narrow AI.

The clinical minefield: Navigating the pathologies of AI

Because AI models are designed to generate plausible sounding text rather than to verify truth, they exhibit specific “pathologies” that can be highly dangerous in a healthcare setting. While companies behind AI models are attempting to mitigate these fundamental flaws, they cannot entirely be eradicated. In fact, improving on how an LLM responds may simply make it more difficult for the person reviewing its output to spot the pathologies, yet these errors persist even on the most advanced models.

Hallucinations (the confident liar)

LLM models are notorious for hallucinating, inventing facts, fabricating botanical actions and contraindications, or generating entirely fake peer reviewed references (5). Because the output is designed to sound authoritative, confident and is grammatically perfect, it is incredibly easy to be fooled. If an LLM is asked to cite a paper on the efficacy of Hypericum perforatum for a specific niche condition, it may simply invent a title, authors, and a DOI link that lead to nowhere. Relying on AI for patient care or professional writing without thoroughly reviewing the output is a direct threat to clinical safety. AI responses cannot be accepted at face value.

Sycophancy (the yes man)

AI models are generally programmed to be helpful, polite, and to please the user. In fact, those who have used the popular model, ChatGPT, will have encountered a prompt for them to “choose the best answer”, which further influences how the model responds. This creates a dangerous phenomenon called sycophancy, where the AI will actively agree with the user’s biases or leading questions which is especially dangerous in a medical setting (6).

If a patient or practitioner inputs a deeply flawed premise, for example, “Tell me why taking high doses of essential oils internally is the best way to cure gut issues”, the AI may validate that dangerous premise and build an argument to support it, rather than offering objective clinical pushback. The chances for sycophancy become more apparent the longer the “conversation” (or context in AI terms), which can often make it more difficult to spot, despite the safety measures AI companies are putting in place. In other words, AI can bypass the safety guards if the context “makes sense” for it to do so.

Regurgitation and model collapse

It is vital to understand what these models are trained on. A significant percentage of the training data for major LLMs comes from Wikipedia, Reddit, Quora, and YouTube transcripts. When patients or practitioners ask general AI models for herbal advice, they are often receiving a regurgitation of internet websites and forums, many of which may have mis- or disinformation. More disturbingly, the popular ChatGPT referenced 47.5% Wikipedia in its responses during a study, which in turn is now being used to write Wikipedia articles in a disturbing positive feedback loop (7,8).

Furthermore, as AI generates a larger share of online content, newer models are increasingly trained on this ‘AI slop’, which is low-quality synthetic data. This feedback loop leads to the homogenisation of information and a significant loss in performance and accuracy. Over time, this can trigger ‘model collapse’,’ a state where the model’s output becomes a distorted caricature of reality, forcing developers to perform massive, costly resets using strictly curated, human-generated datasets (9).

“AI psychosis” and mental health risks

A deeply concerning emerging theme in psychiatric literature is AI psychosis or Chatbot psychosis. Some argue that it’s not a new phenomenon as other mediums (books, radio or television) have also caused psychosis in individuals during their emergence (10). Others suggest that there is a growing concern that these convincing AI bots may reinforce cognitive instability, blur reality boundaries and disrupt self-regulation due to their close resemblance to human speech (11).

In any case, practitioner awareness of the potential convincing delusions of AI chatbots can protect their patients from harm. Notably by educating them on what LLMs are, what they are not and how their predictive algorithms are designed by nature to be great at confidently convincing and affirming our prompts.

Fake AI literature: AI written herbals

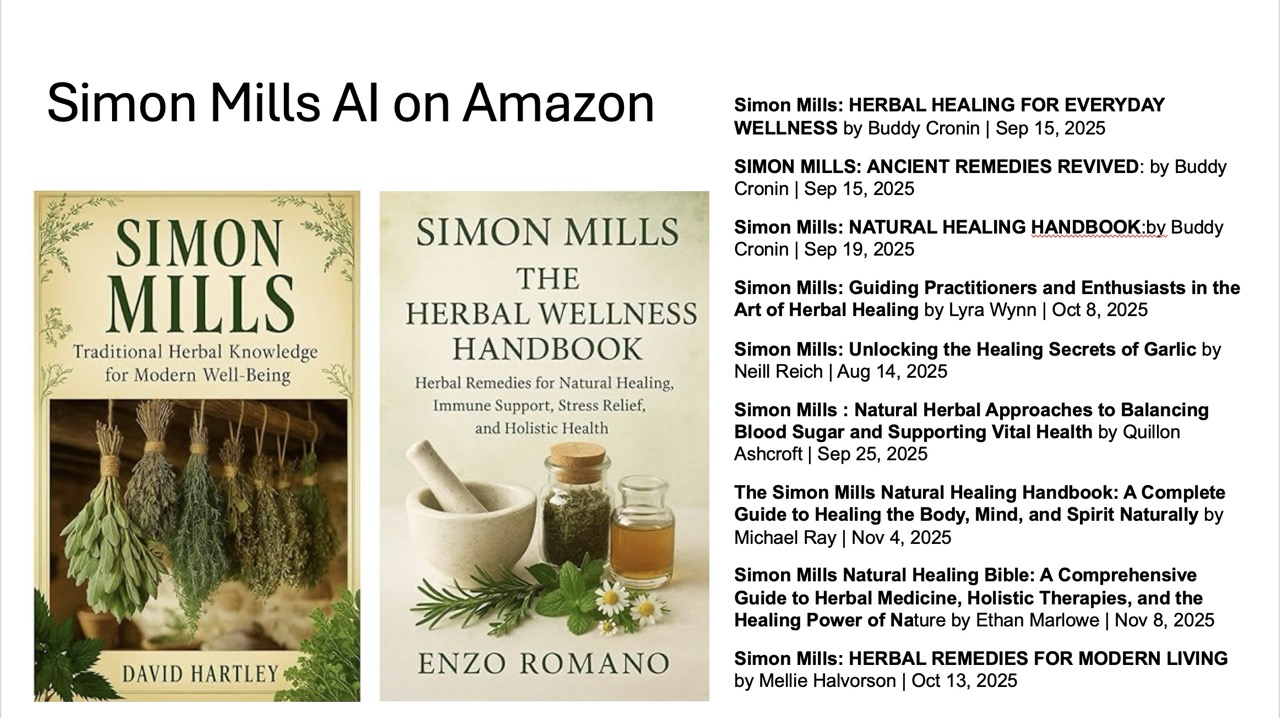

It has also become apparent that AI is being used to author misleading and dangerous herbal literature. There was a letter from David Winston circulating in January 2026 warning that “82% of new herb books listed on Amazon for the year 2025 are AI generated”. Rosemary Gladstar had received an email from British publisher Oliver Rathbone indicating this finding (12,13).

More alarming yet, notable herbalist authors such as Simon Mills have already been prey to fake AI herbals attributed to, or using, their names. These fake AI books are using genuine names to increase authority, making it even more dangerous for both herbalists and public scouring the internet for authentic books. While most publishers will remove such books upon request, the danger is the fact that they get published in the first place.

Biases found in general LLMs

The author performs periodic benchmarks to assess the aforementioned flaws as well as biases found in the responses of the major LLMs. While many of them have improved in their logic, the nuances found in each of their answers is something that isn’t often discussed. The Irish Medical Council itself warns against “hidden biases” in AI systems (2).

This is highly relevant for herbal medicine, where AI models may struggle to interpret the nuances of traditional diagnostics such as functional frameworks and heuristics, or tongue and pulse diagnosis. These models can also exhibit bias toward specific modalities, as demonstrated in the author’s December 2025 AI benchmark.

For example, while ChatGPT and Claude ‘red flagged’ physiomedicalist terms in favour of biomedical terminology, Google’s Gemini and China’s DeepSeek were more respectful of diverse traditions, even elaborating on the legitimacy of these systems for health assessment (14).

This disparity highlights the need for the herbalist sector to adequately be equipped to educate the public, their patients as well as advocating and funding inclusive tools, which remains a challenge for those working with non-standardised models (e.g., there is little to no availability for naturopathic-specific LLMs trained on unbiased, domain appropriate data).

The privacy trap: GDPR legislation and the no free email or AI service rule

The era of voluntary guidance regarding digital tools in healthcare is over. The legislative landscape has shifted dramatically over the years and has moved towards strict legal obligation, especially with the rise of AI.

UK GDPR closely mirrors European standards, and the direction of travel is clear — ignorance of digital privacy is no longer a valid legal defence (15).

Health data is special category data and requires special care

This brings us to one of the most critical vulnerabilities for modern practitioners in the UK and EU, especially for those who would call themselves “technophobes” — the use of “free” digital services. If a practitioner is using free email addresses to communicate with their clients, such as “@gmail.com”, “@hotmail.com”, or even “@protonmail.com”, they most likely do not have a Data Processing Agreement (DPA) in place as required by law. The same applies to free versions of AI tools. Even some premium services may not offer a DPA if they are not specifically designed for business or healthcare use.

Under GDPR Article 28 [and UK-GDPR], a data controller (the practitioner) is strictly required to have a legally binding contract, known as a data processing agreement or addendum (DPA), with all their data processors (web designers, hosts, email providers, AI services, cloud storage, or practice management software). This ensures that personal data is adequately stored, encrypted, and processed on the user’s behalf. Special category data such as health information has even more strict requirements (16,17).

Without valid DPAs, practitioners are strictly liable for privacy violations, not the data processor or service provider. A DPA is a legally binding contract that ensures the tech vendor handles data compliantly and, crucially, guarantees they will not process and sell user data or use it to train AI models. The author has already recommended that professional associations and organisations advise their members that using personal, free email and internet services lacking a DPA represents a severe regulatory and ethical risk. ICO (UK) or the DPC (Ireland) can request a warrant and seize digital assets during an audit such as a personal email inbox if it is implicated in a breach of data.

As data controllers, practitioners are ultimately liable for their clients’ personal data. Whether it is as simple as a contact form on a website, an email from a patient with their name (or worse, blood work results), or a web designer with access to patient user information via an administrative web panel, using a non-compliant internet service means companies can scan and use this data freely.

Inherently, “free” services are not free. Input user data is given to these companies in exchange for their service. While some companies offer DPAs as part of their terms of service for professional bundles, others require manual activation (e.g., Google Workspace requires a manual signature of their Cloud DPA and HIPAA compliance settings within the Admin Panel). Practitioners are strongly advised to verify they have adequate DPAs with all digital services used to communicate and store patient data.

Note: To confuse the matter, the UK’s primary data protection legislation is the Data Protection Act 2018, which is also abbreviated as “DPA”. While this law works alongside the UK GDPR to enforce the standards discussed in this section, it is a piece of legislation and should not be confused with the Data Processing Agreement (or Addendum) contract required for compliance — i.e. A service advertising that it is “DPA compliant” is the same as saying it is “GDPR compliant” but does not mean that it automatically implements the required legally binding contract.

It’s not all bad: A traffic light guide for herbalists and AI

AI is here to stay, and when used ethically and securely, it can alleviate the administrative burden many practitioners face, speed up workflow as well as be an incredible research assistant. In fact, considering it purely an “assistant” is key to knowing what tools to use and when to use them.

AI can instead be imagined as an intern that is incredible at grammar and can compile existing work or research that has been reviewed and validated, but will still make some mistakes when piecing things together. AI won’t do flawless research, but can compile, adjust, abbreviate or elaborate whatever information it is given. There are brilliant AI tools available that isolate and mitigate some of the deep flaws of AI, making it an incredible tool to boost productivity, research and workflow (allowing for more time to spend outside with the plants). But it requires a certain level of AI literacy, understanding and diligence.

Red light: What to avoid (high risk)

- Diagnostics, formulations and dosages: Use of general AI for final diagnostics without validating the given information with genuine sources, or for any formulation, dosage, or safety profile of a prescription is strongly advised against. The practitioner holds the liability, and basing clinical decisions on AI suggestions has no legal or ethical defence.

- Blind referencing: AI generated script or citation can’t be trusted, so it is always worth clicking the link and reading the primary source — to review, validate and check every piece of information that is given. Some primary sources may be written by AI, so checking the authority of the website is important (e.g. Is the source from Wikipedia, a personal blog or a genuine research paper on an authoritative website?)

- Inputting raw patient data or personal proprietary data: Identifiable patient data is not safe to enter into a public chatbot, nor is any proprietary, unpublished work; not unless the AI service offers a Data Protection Agreement/Addendum. Most “free” services use prompts and data to train AI and some paid services may not have the necessary legal requirements in place.

Warning: As of March 2026, individual paid tiers such as ChatGPT Plus or ChatGPT Pro plans do not offer a Data Processing Agreement (DPA); they are therefore not GDPR compliant for processing patient data. To obtain a DPA from OpenAI, their ChatGPT Team or Enterprise plans must be used, which are costly. Alternatively, business grade services like Gemini for Workspace (by Google) include a DPA that ensures input data is not used to train their models, making it a viable option for handling identifiable information. Verifying whether a DPA is available, active and signed can be found via specific account settings before uploading sensitive data. Otherwise, the service provider can be contacted by email, if in doubt.

Green light: What to do (low risk, high reward)

- Brainstorming and drafting: AI can be used to overcome writer’s block or aid in structuring a paragraph or section in a document. It is also excellent for drafting general patient handouts, writing newsletter outlines, or structuring an educational talk based on information and resources already acquired.

- Tone adjustments: AI can rewrite a complex, jargon-heavy email or handout into simpler, more compassionate language for a patient, using one’s own input and information.

- Research assistance and grammar review: Appropriate AI tools may be used to review, draft or compile existing information and data sets into an output adapted to desired needs, saving time and effort. This still will require careful review and manual adjustments.

The right tools for the trade

Not all AI tools are created equal. Moving beyond the standard chatbot means utilising specialised tools that circumvent common multilingual and classification failures:

- For research: Instead of asking ChatGPT or any general AIs for medical studies, use tools like Consensus or Elicit. These AI-enhanced search engines only pull data from peer reviewed scientific journals, drastically reducing the risk of hallucinations.

- For private and health data analysis: For AI to analyse clinical notes or summarise complex blood work, there is a “walled garden” tool. These tools are called retrieval augmented generation (RAG) systems where AI assists the user by purely using the user trusted PDFs, literature, sources and documents. NotebookLM Plus, for instance, is an available service that never trains AI models and is also DPA compliant when used as part of “Google Workspace”. The AI will only search, synthesise and reference the documents provided, and it does not use data given by the user to train its foundational models. The DPA compliant version can be used, for example, to assess the blood work of a patient directly without worrying about censoring their identifiable data by inputting trusted books, presentations and literature alongside their results or to assist in structuring or summarising patient notes and individualised handouts. As a contracted data processor, they would be fully liable for a breach of a practitioner’s patient’s data, so long as they have a DPA in place.

There are countless other tools and systems available, and a core component of AI literacy is the discernment of which platforms are suitable for specific tasks. The above section is not intended to be an exhaustive list of rules, nor is it a complete directory of AI tools, but rather a foundational framework for safe clinical practice.

Conclusion: The human element

We are currently navigating a profound and complicated transition, comparable to the rise of the internet itself. The goal of AI literacy is not to turn herbalists into software engineers, but to ensure that emerging technologies are used to safeguard patients, their privacy and to preserve the integrity of our profession.

AI is brilliant at parsing massive datasets, organising administrative chaos, and synthesising clear and concise text. But it cannot feel a pulse, read the subtle shifts in a patient’s body language, or understand the complex energetics of a living plant interacting with a living human being.

AI organises information but the practitioner provides it. AI will never replace healthcare professionals. But professionals who understand AI, who know its pitfalls, and who wield it with ethics in mind will inevitably get ahead of those who do not and will be appropriately equipped to safeguard the patients who may overuse the technology.

Future development of AI technologies specifically curated for herbalists is not as far reaching as we might think. The primary roadblock is financial, as basic AI hosting servers can cost from one hundred to several hundred pounds a month. However, if resources are pooled within the herbalist community as a collective, and authors are willing to contribute their books and research to the cause, training and hosting a dedicated herbalist AI model is highly feasible.

By building AI systems based strictly on authentic medical and herbal literature, the profession could create a secure ecosystem of knowledge untainted by the biases and limitations of conventionally trained general LLMs. While it would not be a flawless approach, it would ultimately be the safest method for integrating AI into herbal medicine and would bypass the requirement to rely on the proprietary “big tech” models currently dominating the market or systems solely orientated towards the biomedical model.

Declaration of AI use

NotebookLM was used to assist in compiling the background research. Gemini for Workspace was utilised to aid the author in structuring the manuscript and refining the grammar. The final document was thoroughly reviewed, manually adjusted, and verified by the author.

References

- European Union. (2024). Chapter 1 Article 4 AI literacy. Regulation (EU) 2024/1689 (Artificial Intelligence Act). Official Journal of the European Union, L series. [Accessed 25 January 2026] https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng#art_4

- Medical Council (Ireland). (n.d.). Medical Council (Ireland) Statement on AI. [Accessed 25 January 2026] https://www.medicalcouncil.ie/public-information/artificial-intelligence-in-medicine/medical-council-position-statement-on-artificial-intelligence.html

- General Medical Council (GMC). (n.d.). GMC (UK) Research on AI in Medicine. [Accessed 25 January 2026] https://www.gmc-uk.org/professional-standards/learning-materials/artificial-intelligence-and-innovative-technologies

- Sikka, V., & Sikka, V. (2025). Hallucination Stations: On Some Basic Limitations of Transformer Based Language Models. ArXiv, abs/2507.07505. https://doi.org/10.48550/arxiv.2507.07505

- Rumale Vishwanath, P., Tiwari, S., Naik, T. G., Gupta, S., Thai, D. N., Zhao, W., Kwon, S., Ardulov, V., Tarabishy, K., McCallum, A., & Salloum, W. (2024, June 29). Faithfulness hallucination detection in healthcare AI. OpenReview. [Accessed 22 January 2026] https://openreview.net/forum?id=6eMIzKFOpJ

- Chen, S., Gao, M., Sasse, K., & Topaz, M. (2025). When helpfulness backfires: LLMs and the risk of false medical information due to sycophantic behavior. npj Digital Medicine, 8(1), Article 605. [Accessed Sunday 25 January 2026] https://doi.org/10.1038/s41746-025-02008-z

- Lafferty, N. (2025, June 5). AI platform citation patterns: How ChatGPT, Google AI Overviews, and Perplexity source information. Try Profound. [Accessed Sunday 25 January 2026] https://www.tryprofound.com/blog/ai-platform-citation-patterns

- Tangermann, V. (2025, May 23). Terrifying survey claims ChatGPT has overtaken Wikipedia. Futurism. [Accessed Wednesday 4th March 2026] https://futurism.com/survey-chatgpt-overtaken-wikipedia

- Shi, L., Wu, M., Zhang, H., Zhang, Z., Tao, M., & Qu, Q. (2025). A Closer Look at Model Collapse: From a Generalization-to-Memorization Perspective. ArXiv, abs/2509.16499. https://doi.org/10.48550/arXiv.2509.16499

- Carlbring, P., & Andersson, G. (2025). Commentary: AI psychosis is not a new threat: Lessons from media induced delusions. Internet Interventions, 42, Article 100882. [Accessed Wednesday 4th March 2026] https://doi.org/10.1016/j.invent.2025.100882

- Morrin, H., Nicholls, L., Levin, M., Yiend, J., Iyengar, U., DelGuidice, F., … Pollak, T. (2025, July 11). Delusions by design? How everyday AIs might be fuelling psychosis (and what can be done about it). [Accessed 22 January 2026] https://doi.org/10.31234/osf.io/cmy7n_v5

- Tarita, T. (2025, November 4). Amazon’s bestselling herbal guides are overrun by fake authors and AI. ZME Science. https://www.zmescience.com/tech/amazons-bestselling-herbal-guides-are-overrun-by-fake-authors-and-ai/

- National Forum for Herbalists. (2026). I’ve noticed many members asking about regulatory updates for 2026. Here is a summary of what to expect for the [Status update]. Facebook. [Accessed 26 January 2026] https://www.facebook.com/groups/NFHERB/posts/25584812314449057/

- Ó hErodáin, L. (2026) AI in Herbal Medicine: Benchmarking Safety & Clinical Nuance. [Accessed 4 March 2026] https://celticfoxherbal.com/wp-content/uploads/2026/02/AI-Benchmark-results-Dec-2025.pdf

- The Medical Defence Union (The MDU). (2024). Introduction to data protection for independent practitioners. [Accessed 25 January 2026] https://www.themdu.com/guidance-and-advice/guides/introduction-to-the-gdpr-for-independent-practitioners

- Harper James. (2024, January 30). The controller to processor agreement: A GDPR guide. [Accessed 25 January 2026] https://harperjames.co.uk/article/controller-to-processor-agreement/

- Ambit Compliance. (2024). Is your data processing agreement worth the paper it’s written on? [Accessed Sunday 25 January 2026] https://www.ambitcompliance.ie/blog/is-your-data-processing-agreement-worth-the-paper-its-written-on